Publishing research articles is the bedrock of science. Knowledge advances through testing hypotheses, and the only way such advances are communicated to the broader community of scientists is by writing up the results in a report and sending it to a peer-reviewed journal. The assumption is that papers passing through this review filter report robust and solid science.

Of course this is not always the case. Many papers include questionable methodology and data, or are poorly analyzed. And a small minority actually fabricate or misrepresent data. As Retraction Watch often reminds us, we need to be vigilant against bad science creeping into the published literature.

Why should we care about bad science? Erroneous results or incorrect conclusions in scientific papers can lead other researchers astray and result in bad policy. Take, for example, the well-flogged Andrew Wakefield, a since-discredited researcher who published a paper linking autism to vaccines. The paper is so flawed that it does not stand up to basic scrutiny and was rightly retracted (though how it could have passed through peer review is an astounding mystery). However, this incredibly bad science invigorated an anti-vaccine movement in Europe and North America that is responsible for the re-emergence of childhood diseases that should have been eradicated. This bad science is responsible for hundreds of deaths.

(Image from Huffington Post)

Of course most bad science will not result in death. But bad articles waste time and money if researchers go down blind alleys or work to rebut papers. The important thing is that there are avenues available to researchers to question and criticize published work. Nowadays this usually means that papers are criticized through two channels. The first is through blogs (and other social media). Researchers can communicate their concerns and opinions about a paper to the audience that reads their blog or through social media shares. A classic example was the blog post by Rosie Redfield criticizing a paper published in Science that claimed to have discovered bacteria that used arsenic as a food source.

However, there are a few problems with this avenue. The first is that it is not clear that the correct audience is being targeted. For example, if you normally blog about your cat, and your blog followers are fellow cat lovers, then a seemingly random post about a bad paper will likely fall on deaf ears. Secondly, the authors of the original paper may not see your critique and so do not have a fair opportunity to rebut your claims. Finally, your criticism is not peer-reviewed and so flaws or misunderstandings in your writing are less likely to be caught.

Unlike the relatively new blog medium, the second option is as old as scientific publication: writing a commentary that is published in the same journal (often with an opportunity for the authors of the original article to respond). These commentaries are usually reviewed and target the correct audience, namely the scientific community that reads the journal. However, some journals do not have a commentary section and so this avenue is not available to researchers.

Caroline and I experienced this recently when we enquired about the possibility of writing a commentary on a published article that contained flawed analyses. The Editor responded that they do not publish commentaries on their papers! I am an Editor-in-Chief and I routinely deal with letters sent to me that criticize papers we publish. This is an important part of the scientific process. We investigate all claims of error or wrongdoing, and if the concerns appear valid but do not meet the threshold for a retraction, we invite the letter writers to write a commentary (and invite the original authors to write a response). The importance of this option to science cannot be overstated. Bad science needs to be criticized and the broader community of scientists should feel like they have opportunities to check and critique publications.

I can see that there are many reasons why a journal might not bother with commentaries (to save page space for articles, because they are seen as petty squabbles, and so on), but I would argue that scientific journals have important responsibilities to the research community and one of them must be to hold the papers they publish accountable and allow for sound and reasoned criticism of potentially flawed papers.

Looking over the author guidelines of the 40 main ecology and evolution journals (and apologies if I missed statements; author guidelines can be very verbose), only 24 had a clear statement about publishing commentaries on previously published papers. While they all had differing names for these commentary-type articles, they all clearly spelled out that there was a set of guidelines for publishing a critique of an article and how they handle it. I call these 'Group A' journals. The Group A journals hold peer critique after publication as an important part of their publishing philosophy and should be seen as having a higher ethical standard.

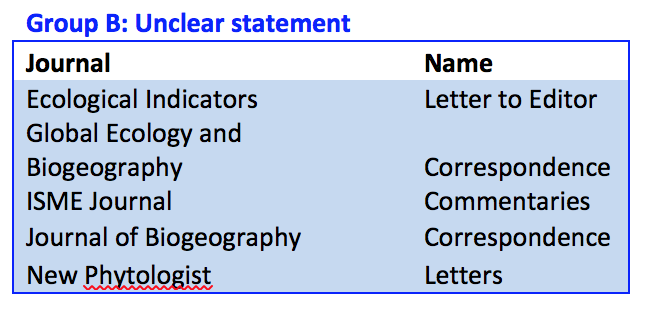

Next are the 'Group B' journals. These five journals had unclear statements about publishing commentaries on previously published papers, but they appeared to have article types that could be used for commentary and critique. It could very well be that these journals do welcome critiques of papers, but they need to state this clearly.

The final class, 'Group C' journals, did not have any clear statements about welcoming commentaries or critiques. These 11 journals might accept critiques, but they did not say so. Further, there was no indication of an article type that would allow commentary on previously published material. If these journals do not allow commentary, I would argue that they should re-evaluate their publishing philosophy. A journal that did away with peer review would rightly be ostracized and seen as not fully scientific, and I believe that post-publication criticism is just as essential as peer review.

I highlight the differences in journals not to shame specific journals, but rather to highlight that we need a set of universal standards to guide all journals. Most journals now adhere to a set of standards for data accessibility and competing interest statements, and I think that they should also feel pressured into accepting a standardized set of protocols to deal with post-publication criticism.
